robots.txt as a security measure..?

Lately I've been looking at the usage of the robots.txt "standard" (now a proposed standard, https://webmasters.googleblog.com/2019/07/rep-id.html, https://www.feedhall.com/Documentation/robots-txt), a file used for communication between site owners and crawlers.

The robots.txt file tells crawlers how a site is structured and which content may or may not be fetched. It may also include, for instance, sitemap links, but its general purpose is to allow or disallow paths for robots (crawlers, spiders, bots, ...). There are different reasons why a site would want to disallow crawlers from certain paths, among others:

- Resource consumption alone

- Resource consumption relative to its value

- Don't want certain pages to be indexed (not sufficient on its own, though: a page can still end up in an index even if it is never crawled, for example when other sites link to it)

- To make it easier for the crawler to find relevant pages (by avoiding URLs such as search.php?term=X&page=Y)
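To make the allow/disallow semantics concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`, roughly what a well-behaved crawler does before fetching a URL. The robots.txt content, the `example.com` URLs and the `ExampleBot` agent name are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an example site; the rules are illustrative only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search.php
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(url: str, agent: str = "ExampleBot") -> bool:
    """Return True if the parsed rules let `agent` fetch `url`."""
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    for url in (
        "https://example.com/index.html",                 # no rule matches
        "https://example.com/search.php?term=X&page=Y",   # prefix /search.php
        "https://example.com/admin/users",                # prefix /admin/
    ):
        print(url, "->", allowed(url))
    # Sitemap lines are collected independently of the user-agent groups.
    print("sitemaps:", parser.site_maps())
```

Note that `urllib.robotparser` does plain prefix matching on paths; as far as I know it does not implement the `*` and `$` path wildcards that some major crawlers understand.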

The primary reason for my investigation was to see whether some crawlers were more commonly allowed or disallowed than others, i.e. more or less popular with site owners. After scanning over 100 million hosts for good and bad crawlers (the results can be found at https://www.feedhall.com), I noticed that robots.txt is quite widely used as a security measure.
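A survey like this boils down to asking, for each host's robots.txt and each crawler name, whether that crawler may fetch the site. The sketch below is not the code behind the feedhall.com results; it is a hypothetical illustration that tallies how many (made-up) robots.txt files block each of a few example user-agents from the site root, again using `urllib.robotparser`.

```python
from collections import Counter
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt bodies standing in for files fetched from many hosts.
SAMPLES = [
    "User-agent: *\nDisallow:\n",  # empty Disallow: everything allowed
    "User-agent: BadBot\nDisallow: /\n\nUser-agent: *\nDisallow: /private/\n",
    "User-agent: *\nDisallow: /\n\nUser-agent: Googlebot\nAllow: /\n",
]

# Crawler names to test; chosen for illustration only.
AGENTS = ["Googlebot", "BadBot", "SomeOtherBot"]


def blocked_counts(samples, agents):
    """Count, per agent, how many robots.txt bodies forbid fetching the site root."""
    counts = Counter({agent: 0 for agent in agents})
    for body in samples:
        parser = RobotFileParser()
        parser.parse(body.splitlines())
        for agent in agents:
            if not parser.can_fetch(agent, "/"):
                counts[agent] += 1
    return counts


if __name__ == "__main__":
    counts = blocked_counts(SAMPLES, AGENTS)
    for agent in AGENTS:
        print(agent, counts[agent])
```

In a real survey `SAMPLES` would be replaced by the files fetched from each host. Be aware that `urllib.robotparser` picks a user-agent group when the group's name appears as a substring of the crawler's name, which is a simplification of how individual crawlers choose their group.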

Plenty of websites use the robots.txt exclusion protocol to keep sensitive data out of search indexes. This is a very bad idea: robots.txt is publicly readable and only voluntarily honored by well-behaved crawlers, so listing a sensitive path there makes it very easy for anyone to find and fetch it. That makes the whole idea almost counter-productive.

I stumbled upon several entries like these:

    User-agent: *
    Disallow: /images/
    Disallow: /includes/
    Disallow: /modules/
    Disallow: /music/
    Disallow: /scripts/
    Disallow: /stylesheets/
    Disallow: /404.php
    Disallow: /database.sqlite
    Disallow: /viewdatabase.php
    Disallow: /map.txt

Some of these paths returned 404s, but an alarmingly high number of sites really do leak sensitive data through robots.txt.

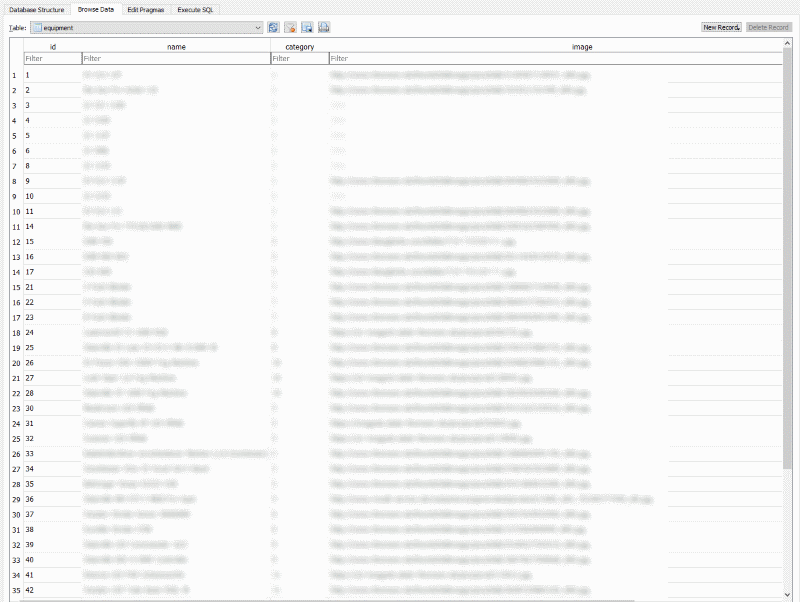

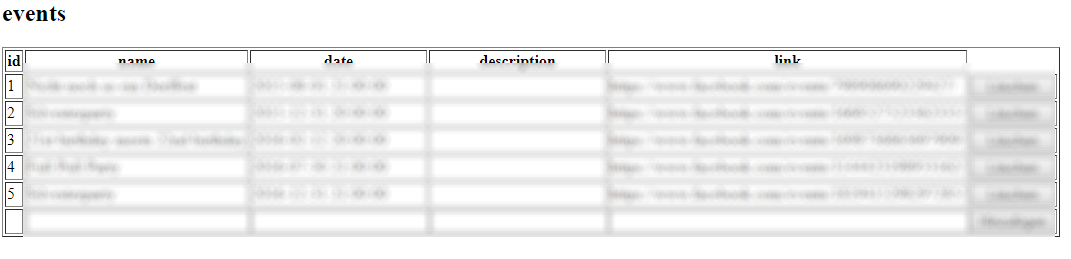
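If you want to check whether your own robots.txt advertises paths like the ones above, a small script can flag suspicious Disallow entries. This is a rough sketch: the `SUSPICIOUS` pattern is a hypothetical heuristic, it will both miss things and flag harmless paths, and a hit means the resource needs real access control on the server, not just removal from robots.txt.

```python
import re

# Hypothetical heuristic: path fragments that often point at data dumps or admin areas.
SUSPICIOUS = re.compile(r"\.sql|\.db|\.bak|backup|dump|admin|database|config", re.IGNORECASE)


def risky_disallows(robots_txt: str) -> list[str]:
    """Return the Disallow paths in robots_txt that match SUSPICIOUS."""
    hits = []
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow":
            path = value.strip()
            if path and SUSPICIOUS.search(path):
                hits.append(path)
    return hits


# The entries quoted earlier in this post.
EXAMPLE = """\
User-agent: *
Disallow: /images/
Disallow: /includes/
Disallow: /modules/
Disallow: /music/
Disallow: /scripts/
Disallow: /stylesheets/
Disallow: /404.php
Disallow: /database.sqlite
Disallow: /viewdatabase.php
Disallow: /map.txt
"""

if __name__ == "__main__":
    print(risky_disallows(EXAMPLE))  # ['/database.sqlite', '/viewdatabase.php']
```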

Complete website databases were available for download (commonly through some /backup/ folder), and even live SQLite databases. Some included product catalogs along with administration paths that required no login:

SQLite dump read with sqlitebrowser:

Administration interface with CRUD support:

Hiding databases, administration interfaces, nudes or any other type of sensitive information behind an explicit Disallow rule is really, really bad. It would even be a million times better to place the sensitive files inside /TOP_SECRET_FOLDER and disallow the entire path, which at least avoids naming the individual paths explicitly.
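The difference is easy to see with `urllib.robotparser`: a single directory rule keeps compliant crawlers away from everything underneath it, while the robots.txt file itself only reveals the folder name. A minimal sketch, with made-up file names inside the `/TOP_SECRET_FOLDER/` example:

```python
from urllib.robotparser import RobotFileParser

# Two hypothetical robots.txt files hiding the same (made-up) files.
EXPLICIT = (
    "User-agent: *\n"
    "Disallow: /database.sqlite\n"
    "Disallow: /viewdatabase.php\n"
)
FOLDER = (
    "User-agent: *\n"
    "Disallow: /TOP_SECRET_FOLDER/\n"
)


def load(text: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


def published_paths(text: str) -> list[str]:
    """Return every Disallow value, i.e. what anyone reading the file learns."""
    paths = []
    for line in text.splitlines():
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow":
            paths.append(value.strip())
    return paths


if __name__ == "__main__":
    folder = load(FOLDER)
    # The prefix rule covers files that are never named in robots.txt.
    print(folder.can_fetch("AnyBot", "/TOP_SECRET_FOLDER/database.sqlite"))  # False
    print(published_paths(EXPLICIT))  # ['/database.sqlite', '/viewdatabase.php']
    print(published_paths(FOLDER))    # ['/TOP_SECRET_FOLDER/']
```

Either way the folder name is still public and non-compliant clients are free to ignore the rule, so this only reduces what robots.txt leaks; it does not protect anything.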

Do not use robots.txt as a way of "protecting" files / paths / ANYTHING.